Better Parameter-Free Stochastic Optimization with ODE Updates for Coin-Betting

Keyi Chen, John Langford, Francesco Orabona

[AAAI-22] Main Track

Abstract:

Parameter-free stochastic gradient descent (PFSGD) algorithms do not require setting learning rates while achieving optimal theoretical performance. In practical applications, however, there remains an empirical gap between tuned stochastic gradient descent (SGD) and PFSGD. In this paper, we close the empirical gap with a new parameter-free algorithm based on continuous-time Coin-Betting on truncated models. The new update is derived through the solution of an Ordinary Differential Equation (ODE) and solved in a closed form. We show empirically that this new parameter-free algorithm outperforms algorithms with the ``best default'' learning rates and almost matches the performance of finely tuned baselines without anything to tune.

Introduction Video

Sessions where this paper appears

-

Poster Session 5

Sat, February 26 12:45 AM - 2:30 AM (+00:00)

Sat, February 26 12:45 AM - 2:30 AM (+00:00)

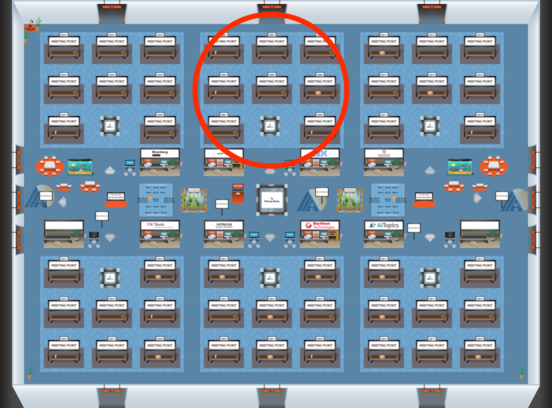

Blue 2

Blue 2

-

Poster Session 10

Sun, February 27 4:45 PM - 6:30 PM (+00:00)

Sun, February 27 4:45 PM - 6:30 PM (+00:00)

Blue 2

Blue 2