CC-Cert: A Probabilistic Approach to Certify General Robustness of Neural Networks

Mikhail Pautov, Nurislam Tursynbek, Marina Munkhoeva, Nikita Muravev, Aleksandr Petiushko, Ivan Oseledets

[AAAI-22] Main Track

Abstract:

In safety-critical machine learning applications, it is crucial to defend models against adversarial attacks --- small modifications of the input that change the predictions. Besides rigorously studied $\ell_p$-bounded additive perturbations, semantic perturbations (e.g. rotation, translation) raise a serious concern on deploying ML systems in real-world. Therefore, it is important to provide provable guarantees for deep learning models against semantically meaningful input transformations. In this paper, we propose a new universal probabilistic certification approach based on Chernoff-Cramer bounds that can be used in general attack settings. We estimate the probability of a model to fail if the attack is sampled from a certain distribution. Our theoretical findings are supported by experimental results on different datasets.

Introduction Video

Sessions where this paper appears

-

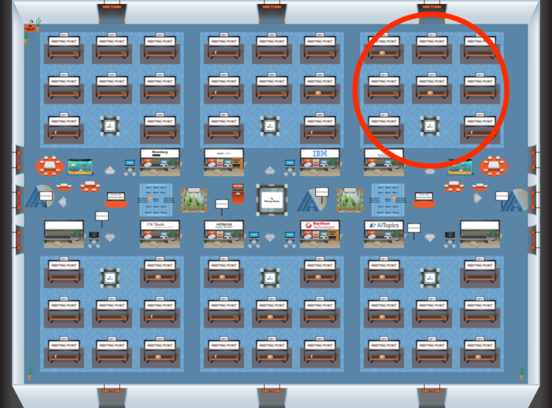

Poster Session 6

Blue 3

Blue 3

-

Poster Session 7

Blue 3

Blue 3