Efficient Decentralized Stochastic Gradient Descent Method for Nonconvex Finite-Sum Optimization Problems

Wenkang Zhan, Gang Wu, Hongchang Gao

[AAAI-22] Main Track

Abstract:

Decentralized stochastic gradient descent methods have attracted increasing interest in recent years, and numerous methods have been proposed for the nonconvex finite-sum optimization problem. However, existing methods have a large sample complexity, which slows their empirical convergence. To address this issue, we propose a novel decentralized stochastic gradient descent method for the nonconvex finite-sum optimization problem that enjoys better sample and communication complexities than existing methods. Importantly, these complexities are independent of the network topology of the decentralized training system. To the best of our knowledge, our work is the first to achieve such favorable sample and communication complexities. Finally, extensive experiments confirm the superior performance of the proposed method.

Sessions where this paper appears

- Poster Session 3

Fri, February 25 8:45 AM - 10:30 AM (+00:00)

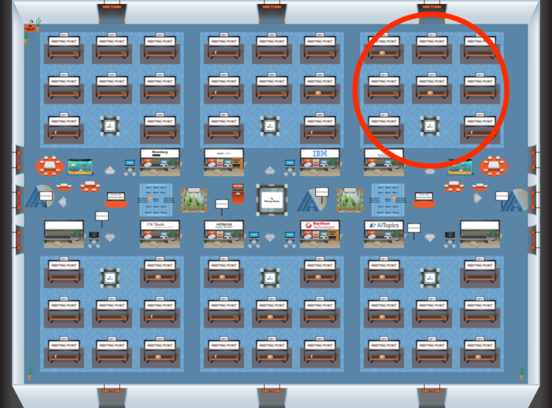

Blue 3

- Poster Session 8

Sun, February 27 12:45 AM - 2:30 AM (+00:00)

Blue 3
