Deep Neural Networks Learn Meta-Structures from Noisy Labels in Semantic Segmentation

Yaoru Luo, Guole Liu, Yuanhao Guo, Ge Yang

[AAAI-22] Main Track

Abstract:

How deep neural networks (DNNs) learn from noisy labels has been studied extensively in image classification but much less in image segmentation. So far, our understanding of the learning behavior of DNNs trained on noisy segmentation labels remains limited. In this study, we address this deficiency in both binary segmentation of biological microscopy images and multi-class segmentation of natural images. We generate extremely noisy labels by randomly sampling a small fraction (e.g., 10%) or flipping a large fraction (e.g., 90%) of the ground-truth labels. When trained on these noisy labels, DNNs achieve largely the same segmentation performance as when trained on the original ground truth. This indicates that, in supervised training for semantic segmentation, DNNs learn structures hidden in the labels rather than the pixel-level labels per se. We refer to these hidden structures as meta-structures. When DNNs are trained on labels with different perturbations to the meta-structures, we find consistent degradation in their segmentation performance. In contrast, incorporating meta-structure information substantially improves the performance of an unsupervised segmentation model for binary semantic segmentation. We define meta-structures mathematically as spatial density distributions and show both theoretically and experimentally how this formulation explains key learning behaviors observed in DNNs.
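
To make the sampling perturbation concrete, here is a minimal sketch in Python with NumPy. It builds a noisy binary label by keeping a random 10% of the foreground pixels of a toy mask, then computes a local foreground density with a box filter. The box-filter density, the window radius, the 0.05 threshold, and the helper names `sample_foreground` and `local_density` are illustrative assumptions, not the authors' definitions or code, and flipping-based noise is not covered. The point it illustrates is that although 90% of the foreground labels are removed, the spatial density of the remaining labels still outlines the object.

```python
import numpy as np


def sample_foreground(mask: np.ndarray, keep: float, rng: np.random.Generator) -> np.ndarray:
    """Hypothetical helper: keep a random fraction `keep` of foreground pixels, set the rest to background."""
    noisy = np.zeros_like(mask)
    fg = np.flatnonzero(mask)                  # flat indices of foreground pixels
    n_keep = int(round(keep * fg.size))
    chosen = rng.choice(fg, size=n_keep, replace=False)
    noisy.flat[chosen] = 1
    return noisy


def local_density(label: np.ndarray, radius: int) -> np.ndarray:
    """Hypothetical helper: fraction of foreground pixels in a (2r+1) x (2r+1) window.

    Uses a summed-area table; pixels outside the image count as background.
    This box-filter density is an illustrative assumption, not the paper's definition.
    """
    k = 2 * radius + 1
    padded = np.pad(label.astype(float), radius)
    sat = np.pad(padded.cumsum(axis=0).cumsum(axis=1), ((1, 0), (1, 0)))
    h, w = label.shape
    window_sum = (sat[k:k + h, k:k + w] - sat[:h, k:k + w]
                  - sat[k:k + h, :w] + sat[:h, :w])
    return window_sum / (k * k)


rng = np.random.default_rng(0)
mask = np.zeros((64, 64), dtype=np.uint8)
mask[16:48, 16:48] = 1                         # toy ground truth: one 32 x 32 square

noisy = sample_foreground(mask, keep=0.10, rng=rng)   # keep 10% of foreground labels
density = local_density(noisy, radius=6)
recovered = density > 0.05                     # assumed threshold: half the kept fraction

truth = mask == 1
iou = (recovered & truth).sum() / (recovered | truth).sum()
print(f"kept {noisy.sum()} of {mask.sum()} foreground labels; IoU after density thresholding: {iou:.2f}")
```

The raw noisy label overlaps the square by only about 10% in IoU, yet thresholding its density map recovers most of the object. This is one informal way to read the claim that what survives such noise is a spatial density rather than the individual pixel labels.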

Sessions where this paper appears

-

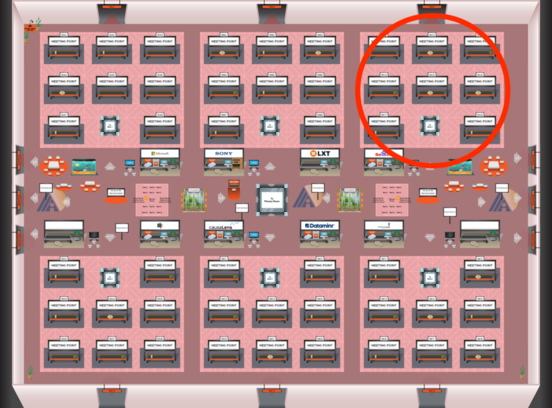

Poster Session 4

Fri, February 25 5:00 PM - 6:45 PM (+00:00)

Fri, February 25 5:00 PM - 6:45 PM (+00:00)

Red 3

Red 3

- Poster Session 8: Sun, February 27, 12:45 AM - 2:30 AM (+00:00), Red 3

- Oral Session 8: Sun, February 27, 2:30 AM - 3:45 AM (+00:00), Red 3