Unsupervised Representation for Semantic Segmentation by Implicit Cycle-Attention Contrastive Learning

Bo Pang, Yizhuo Li, Yifan Zhang, Gao Peng, Jiajun Tang, Kaiwen Zha, Jiefeng Li, Cewu Lu

[AAAI-22] Main Track

Abstract:

We study unsupervised representation learning for semantic segmentation. Unlike previous work, which provides unsupervised pre-trained backbones for segmentation models that still require supervised fine-tuning, we focus on representations trained solely by unsupervised methods. This means the model must directly produce pixel-level, linearly separable semantic results. We first explore and present two factors that significantly affect segmentation under the contrastive learning framework: 1) the difficulty and diversity of the positive contrastive pairs, and 2) the balance of global and local features. To optimize these factors, we propose cycle-attention contrastive learning (CACL). CACL exploits the semantic continuity of video frames, adopting an unsupervised cycle-consistent attention mechanism to implicitly conduct contrastive learning with difficult, global-local-balanced positive pixel pairs. Compared with the baseline model MoCo-v2 and other unsupervised methods, CACL demonstrates consistently superior performance on the PASCAL VOC (+4.5 mIoU) and Cityscapes (+4.5 mIoU) datasets.
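The abstract reports its gains in mIoU (mean intersection-over-union). For readers unfamiliar with the metric, the following is a minimal sketch of how it can be computed for a single flattened label map. The function name `mean_iou` and the toy labels are hypothetical, chosen only for illustration; this is not the authors' evaluation code, and benchmark protocols usually accumulate intersections and unions over the whole dataset rather than per image.

```python
def mean_iou(pred, target, num_classes):
    """Mean IoU over the classes that occur in pred or target.

    pred, target: equal-length sequences of integer class labels,
    one per pixel (a flattened label map).
    """
    ious = []
    for c in range(num_classes):
        # Pixels where both prediction and ground truth are class c.
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        # Pixels where either prediction or ground truth is class c.
        union = sum(1 for p, t in zip(pred, target) if p == c or t == c)
        if union > 0:  # skip classes absent from both maps
            ious.append(inter / union)
    return sum(ious) / len(ious) if ious else 0.0


# Toy example (made-up labels): six pixels, three classes.
pred = [0, 0, 1, 1, 2, 2]
target = [0, 1, 1, 1, 2, 0]
score = mean_iou(pred, target, 3)
print(score)
```

Here the per-class IoUs are 1/3, 2/3 and 1/2, so the mean is 0.5.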

Sessions where this paper appears

-

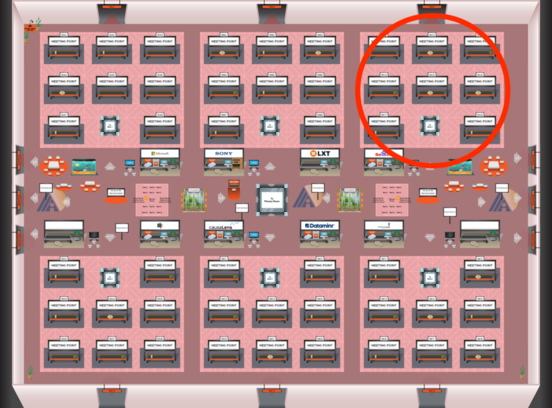

Poster Session 1

Thu, February 24 4:45 PM - 6:30 PM (+00:00)

Red 3

Poster Session 11

Mon, February 28 12:45 AM - 2:30 AM (+00:00)

Red 3
