Contrastive Quantization with Code Memory for Unsupervised Image Retrieval

Jinpeng Wang, Ziyun Zeng, Bin Chen, Tao Dai, Shu-Tao Xia

[AAAI-22] Main Track

Abstract:

The high efficiency in computation and storage makes hashing (including binary hashing and quantization) a common strategy in large-scale retrieval systems. To alleviate the reliance on expensive annotations, unsupervised deep hashing becomes an important research problem. This paper provides a novel solution to unsupervised deep quantization, namely Contrastive Quantization with Code Memory (MeCoQ). Different from existing reconstruction-based strategies, we learn unsupervised binary descriptors by contrastive learning, which can better capture discriminative visual semantics. Besides, we uncover that codeword diversity regularization is critical to prevent contrastive learning-based quantization from model degeneration. Moreover, we introduce a novel quantization code memory module that boosts contrastive learning with lower feature drift than conventional feature memories. Extensive experiments on benchmark datasets show that MeCoQ outperforms state-of-the-art methods.

Introduction Video

Sessions where this paper appears

-

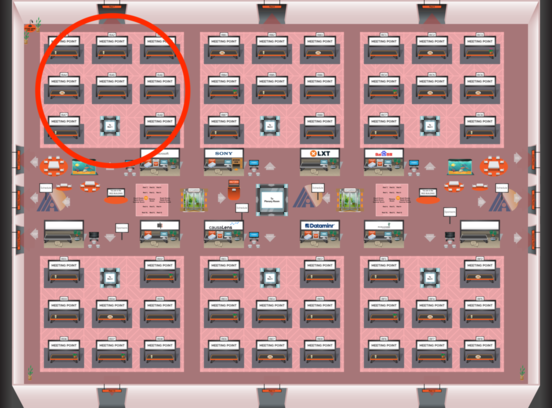

Poster Session 3

Fri, February 25 8:45 AM - 10:30 AM (+00:00)

Fri, February 25 8:45 AM - 10:30 AM (+00:00)

Red 1

Red 1

-

Poster Session 8

Sun, February 27 12:45 AM - 2:30 AM (+00:00)

Sun, February 27 12:45 AM - 2:30 AM (+00:00)

Red 1

Red 1

-

Oral Session 3

Fri, February 25 10:30 AM - 11:45 AM (+00:00)

Fri, February 25 10:30 AM - 11:45 AM (+00:00)

Red 1

Red 1