On the Computation of Necessary and Sufficient Explanations

Adnan Darwiche, Chunxi Ji

[AAAI-22] Main Track

Abstract:

For Boolean classifiers, the {\em complete reason} behind a decision is a Boolean formula that characterizes why the decision was made. This recently introduced notion has a number of applications, which include generating explanations, detecting decision bias, and evaluating counterfactual queries. Prime {\em implicants} of the complete reason are known as {\em sufficient reasons} for the decision, and they correspond to what is known as PI explanations and abductive explanations. In this paper, we refer to the prime {\em implicates} of a complete reason as {\em necessary reasons} for the decision. We justify this terminology semantically and show that necessary reasons correspond to what is known as contrastive explanations. We also study the computation of complete reasons for multi-class decision trees and graphs with nominal and numeric features, for which we derive efficient, closed-form complete reasons. We further investigate the computation of shortest necessary and sufficient reasons for a broad class of complete reasons, which includes the derived closed forms and the complete reasons for Sentential Decision Diagrams (SDDs). We provide an algorithm that can enumerate their shortest necessary reasons in output-polynomial time. Enumerating shortest sufficient reasons for this class of complete reasons is hard even for a single reason. For this problem, we provide an algorithm that appears to be quite efficient, as we show empirically.
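
To make the terminology concrete, the following minimal sketch enumerates the prime implicants (sufficient reasons) and prime implicates (necessary reasons) of a small formula by brute force. The formula `complete_reason`, the variable names, and every helper function are hypothetical illustrations and are not taken from the paper; the exhaustive search is exponential in the number of variables and is not the algorithm the paper proposes.

```python
from itertools import combinations, product

# Hypothetical toy complete reason over three variables: A and (B or C).
VARIABLES = ["A", "B", "C"]

def complete_reason(a):
    return a["A"] and (a["B"] or a["C"])

def assignments(variables):
    """Yield every total truth assignment over the variables as a dict."""
    for values in product([False, True], repeat=len(variables)):
        yield dict(zip(variables, values))

def literal_sets(variables, size):
    """Yield every consistent set of `size` literals; a literal is (variable, value)."""
    for chosen in combinations(variables, size):
        for values in product([False, True], repeat=size):
            yield frozenset(zip(chosen, values))

def is_implicant(term, formula, variables):
    # Read `term` as a conjunction: every assignment satisfying it satisfies the formula.
    return all(formula(a) for a in assignments(variables)
               if all(a[v] == val for v, val in term))

def is_implicate(clause, formula, variables):
    # Read `clause` as a disjunction: every assignment satisfying the formula satisfies it.
    return all(any(a[v] == val for v, val in clause)
               for a in assignments(variables) if formula(a))

def prime(check, formula, variables):
    """Collect subset-minimal literal sets passing `check`, scanning by increasing size."""
    found = []
    for size in range(1, len(variables) + 1):
        for lits in literal_sets(variables, size):
            # Skip any set that strictly contains an already-found minimal set.
            if check(lits, formula, variables) and not any(p < lits for p in found):
                found.append(lits)
    return found

sufficient_reasons = prime(is_implicant, complete_reason, VARIABLES)
necessary_reasons = prime(is_implicate, complete_reason, VARIABLES)
```

For this toy formula, the sufficient reasons are the terms A ∧ B and A ∧ C, and the necessary reasons are the clauses A and B ∨ C.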

Sessions where this paper appears

-

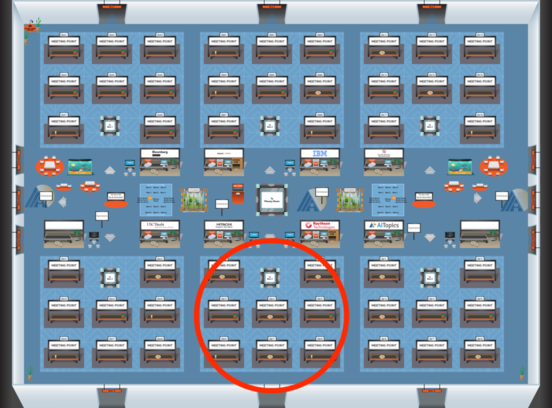

Poster Session 4

Fri, February 25 5:00 PM - 6:45 PM (+00:00)

Blue 5

Poster Session 8

Sun, February 27 12:45 AM - 2:30 AM (+00:00)

Blue 5
