Exploring Visual Context for Weakly Supervised Person Search

Yichao Yan, Jinpeng Li, Shengcai Liao, Jie Qin, Bingbing Ni, Ke Lu, Xiaokang Yang

[AAAI-22] Main Track

Abstract:

Person search has recently emerged as a challenging task that jointly addresses pedestrian detection and person re-identification. Existing approaches follow a fully supervised setting where both bounding box and identity annotations are available. However, annotating identities is labor-intensive, which limits the practicability and scalability of current frameworks. This paper considers weakly supervised person search, in which only bounding box annotations are available. We propose to address this novel task by investigating three levels of context clues (i.e., detection, memory and scene) in unconstrained natural images. The first two are employed to promote local and global discriminative capabilities, while the third enhances clustering accuracy. Despite its simple design, our method, CGPS, boosts the baseline model by 8.8% in mAP on CUHK-SYSU. Surprisingly, it even performs comparably to several supervised person search models. The code is available in the supplementary materials and will be released.

Sessions where this paper appears

-

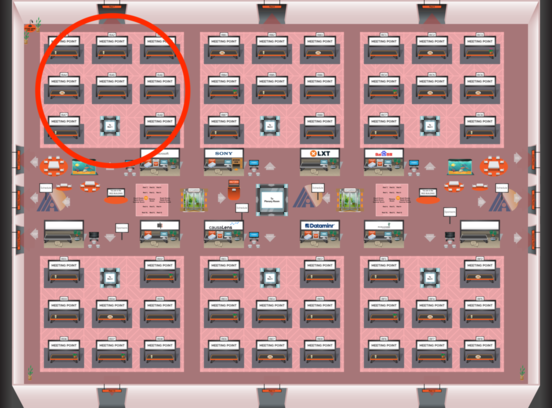

Poster Session 3

Fri, February 25 8:45 AM - 10:30 AM (+00:00)

Red 1

- Poster Session 8

Sun, February 27 12:45 AM - 2:30 AM (+00:00)

Red 1
