A Fusion-Denoising Attack on InstaHide with Data Augmentation

Xinjian Luo, Xiaokui Xiao, Yuncheng Wu, Juncheng Liu, Beng Chin Ooi

[AAAI-22] Main Track

Abstract:

InstaHide is a state-of-the-art mechanism for protecting private training images, which works by mixing multiple private images and modifying them such that their visual features are indistinguishable to the naked eye. In recent work, however, Carlini et al. show that it is possible to reconstruct private images from the encrypted dataset generated by InstaHide. Nevertheless, we demonstrate that Carlini et al.'s attack can be easily defeated by incorporating data augmentation into InstaHide. This leads to a natural question: is InstaHide with data augmentation secure?

In this paper, we provide a negative answer to this question, by presenting an attack for recovering private images from the outputs of InstaHide even when data augmentation is present.

The basic idea of our attack is to use a comparative network to identify encrypted images that are likely to correspond to the same private image, and then employ a fusion-denoising network for restoring the private image from the encrypted ones, taking into account the effects of data augmentation.

Extensive experiments demonstrate the effectiveness of the proposed attack in comparison to Carlini et al.'s attack.
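For readers unfamiliar with the mechanism under attack, the mixing step mentioned in the abstract can be illustrated with a minimal sketch. It assumes the commonly described form of InstaHide: a random convex combination of k images followed by a random pixel-wise sign flip. The function name `instahide_encode`, the flat-list image representation, and the parameter `k` are hypothetical choices made for illustration; this is not the authors' code, and it omits both the public-image mixing and the data augmentation step that the paper studies.

```python
import random

def instahide_encode(private_images, k=2, rng=None):
    """Simplified InstaHide-style encoding (illustrative assumption, not the paper's code).

    private_images: list of images, each a flat list of floats in [-1, 1].
    Returns the encoded image and the mixing weights that were used.
    """
    rng = rng or random.Random()
    # Pick k distinct images to mix together.
    chosen = rng.sample(private_images, k)
    # Draw random positive weights and normalise them so they sum to 1.
    raw = [rng.random() for _ in range(k)]
    total = sum(raw)
    weights = [w / total for w in raw]
    # Pixel-wise convex combination of the chosen images.
    n = len(chosen[0])
    mixed = [sum(w * img[i] for w, img in zip(weights, chosen)) for i in range(n)]
    # Random sign flip per pixel hides the visual content of the mixture.
    mask = [rng.choice((-1.0, 1.0)) for _ in range(n)]
    encoded = [m * s for m, s in zip(mixed, mask)]
    return encoded, weights
```

Because the sign mask changes only the sign of each pixel, the absolute values of an encoded image still carry information about the mixture, which is roughly why encodings that share a private image can be related to one another.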

Sessions where this paper appears

-

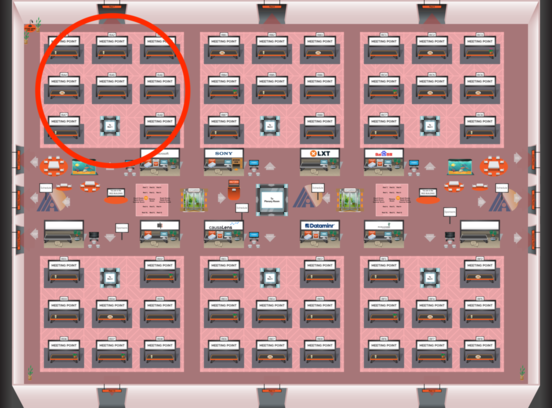

Poster Session 2

Fri, February 25 12:45 AM - 2:30 AM (+00:00)

Fri, February 25 12:45 AM - 2:30 AM (+00:00)

Red 1

Red 1

-

Poster Session 12

Mon, February 28 8:45 AM - 10:30 AM (+00:00)

Mon, February 28 8:45 AM - 10:30 AM (+00:00)

Red 1

Red 1