Rendering-Aware HDR Environment Map Prediction From a Single Image

Jun-Peng Xu, Chen-Yu Zuo, Fang-Lue Zhang, Miao Wang

[AAAI-22] Main Track

Abstract:

High dynamic range (HDR) illumination estimation from a single low dynamic range (LDR) image is a significant task in computer vision, graphics, and augmented reality. We present a two-stage deep learning-based method to predict an HDR environment map from a single narrow field-of-view LDR image. We first learn a hybrid parametric representation that sufficiently covers high- and low-frequency illumination components in the environment. Taking the estimated illuminations as guidance, we build a generative adversarial network to synthesize an HDR environment map that enables realistic rendering effects. We specifically consider the rendering effect by supervising the networks using rendering losses in both stages, on the predicted environment map as well as the hybrid illumination representation. Quantitative and qualitative experiments demonstrate that our approach achieves lower relighting errors for virtual object insertion and is preferred by users compared to state-of-the-art methods.

Introduction Video

Sessions where this paper appears

-

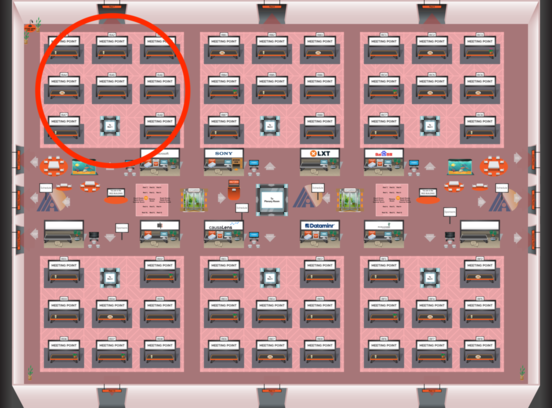

Poster Session 2

Fri, February 25 12:45 AM - 2:30 AM (+00:00)

Fri, February 25 12:45 AM - 2:30 AM (+00:00)

Red 1

Red 1

-

Poster Session 12

Mon, February 28 8:45 AM - 10:30 AM (+00:00)

Mon, February 28 8:45 AM - 10:30 AM (+00:00)

Red 1

Red 1

-

Oral Session 2

Fri, February 25 2:30 AM - 3:45 AM (+00:00)

Fri, February 25 2:30 AM - 3:45 AM (+00:00)

Red 1

Red 1