Enhancing Counterfactual Classification Performance via Self-Training

Ruijiang Gao, Max Biggs, Wei Sun, Ligong Han

[AAAI-22] Main Track

Abstract:

In many settings, unlike traditional supervised learning, only partial feedback is available: we observe outcomes for the chosen actions, but not the counterfactual outcomes associated with the alternatives. Such settings encompass a wide variety of applications, including pricing, online marketing, and precision medicine. A key challenge is that observational data are influenced by the historical policies deployed in the system, yielding a biased data distribution. We approach this task as a domain adaptation problem and propose a self-training algorithm, Counterfactual Self-Training (CST), which uses pseudolabelling to impute outcomes with *categorical* values for the *finite* set of unseen actions in the observational data, thereby simulating a randomized trial. CST iteratively imputes pseudolabels and retrains the model. In addition, we show that an input consistency loss, as suggested by recent theoretical analysis of pseudolabelling, can further improve CST performance. We demonstrate the effectiveness of the proposed algorithms on both synthetic and real datasets.
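The impute-and-retrain loop described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the logistic-regression model, the feature map, the 0.5 threshold for hard pseudolabels, the binary outcome, and all function names are assumptions made for illustration, and the input consistency loss is omitted.

```python
import math

def features(x, a, n_actions):
    # Hypothetical feature map: bias, context, one-hot action, context-action interaction.
    onehot = [1.0 if a == k else 0.0 for k in range(n_actions)]
    return [1.0, x] + onehot + [x * v for v in onehot]

def predict_proba(w, x, a, n_actions):
    # Probability that the (binary) outcome is 1 for context x under action a.
    z = sum(wi * fi for wi, fi in zip(w, features(x, a, n_actions)))
    z = max(-30.0, min(30.0, z))  # clamp to avoid overflow in exp
    return 1.0 / (1.0 + math.exp(-z))

def fit(data, n_actions, epochs=200, lr=0.1):
    # Plain logistic regression trained by full-batch gradient descent.
    dim = 2 + 2 * n_actions
    w = [0.0] * dim
    for _ in range(epochs):
        grad = [0.0] * dim
        for x, a, y in data:
            err = predict_proba(w, x, a, n_actions) - y
            for j, fj in enumerate(features(x, a, n_actions)):
                grad[j] += err * fj
        w = [wj - lr * gj / len(data) for wj, gj in zip(w, grad)]
    return w

def counterfactual_self_training(logged, n_actions, rounds=3):
    # logged: list of (context, observed_action, observed_outcome) tuples.
    w = fit(logged, n_actions)  # initial model uses observed outcomes only
    augmented = list(logged)
    for _ in range(rounds):
        augmented = list(logged)  # observed outcomes are always kept as-is
        for x, a_obs, _ in logged:
            for a in range(n_actions):
                if a != a_obs:
                    # Impute a hard pseudolabel for each unseen action.
                    pseudo = 1 if predict_proba(w, x, a, n_actions) >= 0.5 else 0
                    augmented.append((x, a, pseudo))
        w = fit(augmented, n_actions)  # retrain on the simulated randomized trial
    return w, augmented
```

After each round, every context appears once per action, so the augmented data has the shape of a randomized trial even though most of its outcomes are model-imputed rather than observed.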

Sessions where this paper appears

-

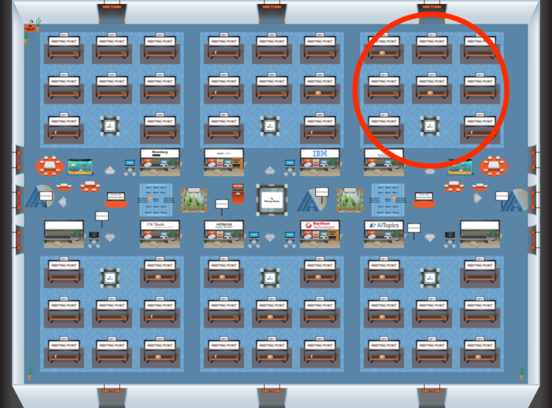

Poster Session 4

Fri, February 25 5:00 PM - 6:45 PM (+00:00)

Fri, February 25 5:00 PM - 6:45 PM (+00:00)

Blue 3

Blue 3

-

Poster Session 9

Sun, February 27 8:45 AM - 10:30 AM (+00:00)

Sun, February 27 8:45 AM - 10:30 AM (+00:00)

Blue 3

Blue 3