Longitudinal Fairness with Censorship

Wenbin Zhang, Jeremy C Weiss

[AAAI-22] AI for Social Impact Track

Abstract:

Recent works in artificial intelligence fairness attempt to mitigate discrimination by proposing constrained optimization programs that achieve parity for some fairness statistic. Most assume availability of the class label, which is impractical in many real-world applications such as precision medicine, actuarial analysis and recidivism prediction. Here we consider fairness in longitudinal right-censored environments, where the time to event might be unknown, resulting in censorship of the class label and inapplicability of existing fairness studies. We devise applicable fairness measures, propose a debiasing algorithm to bridge fairness with and without censorship, and provide necessary theoretical constructs for these important and socially-sensitive tasks. Our experiments on four censored datasets confirm the utility of our approach.
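To make "censorship of the class label" concrete, the following is a minimal sketch and is not taken from the paper. It assumes each individual is recorded as an observed time plus a flag saying whether the event was actually seen. The function name `label_at_horizon`, the horizon of 5.0, and the sample records are all hypothetical and chosen only for illustration.

```python
from typing import Optional


def label_at_horizon(observed_time: float, event_observed: bool,
                     horizon: float) -> Optional[int]:
    """Return the binary label "event occurred by `horizon`".

    Returns None when right-censoring hides the answer.
    (Hypothetical helper for illustration, not the authors' method.)
    """
    if event_observed and observed_time <= horizon:
        return 1  # the event was seen at or before the horizon
    if observed_time > horizon:
        return 0  # still event-free when the horizon passed
    return None  # follow-up ended before the horizon: label unknown


# Hypothetical records: (observed_time, event_observed)
records = [(2.0, True), (7.0, False), (3.0, False), (9.0, True)]
labels = [label_at_horizon(t, e, horizon=5.0) for t, e in records]
print(labels)
```

The third record is censored at time 3.0, before the horizon, so its label is unknown. A fairness statistic that compares predictions against class labels cannot be evaluated on such records without either discarding them or adapting the measure.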

Sessions where this paper appears

- Poster Session 5

Sat, February 26 12:45 AM - 2:30 AM (+00:00)

Sat, February 26 12:45 AM - 2:30 AM (+00:00)

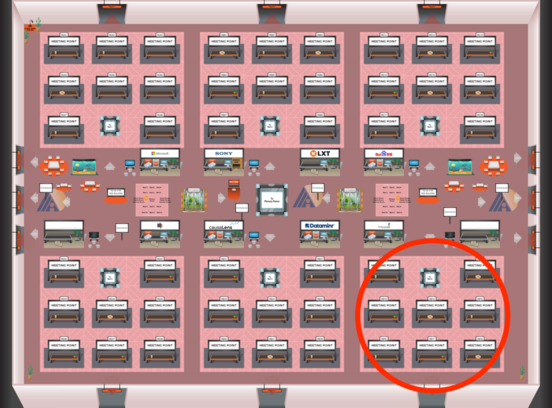

Red 6

- Poster Session 12

Mon, February 28 8:45 AM - 10:30 AM (+00:00)

Red 6
