Wasserstein Adversarial Transformer for Cloud Workload Prediction

Shivani Arbat, Vinod K. Jayakumar, Jaewoo Lee, Wei Wang, In Kee Kim

[IAAI-22] Emerging Applications of AI

Abstract:

Predictive VM (Virtual Machine) auto-scaling is a promising technique to optimize cloud applications’ operating costs and performance. Understanding the job arrival rate is crucial for accurately predicting future changes in cloud workloads and proactively provisioning and de-provisioning VMs for hosting the applications. However, developing a model that accurately predicts cloud workload changes is extremely challenging due to the dynamic nature of cloud workloads. Long Short-Term Memory (LSTM) models have been developed for cloud workload prediction. Unfortunately, the state-of-the-art LSTM model leverages recurrences to predict, which naturally adds complexity and increases the inference overhead as input sequences grow longer. To develop a cloud workload prediction model with high accuracy and low inference overhead, this work presents a novel time-series forecasting model called WGAN-gp Transformer, inspired by the Transformer network and improved Wasserstein-GANs. The proposed method adopts a Transformer network as a generator and a multi-layer perceptron as a critic. The extensive evaluations with real-world workload traces show WGAN-gp Transformer achieves 5× faster inference time with up to 5.1% higher prediction accuracy against the state-of-the-art. We also apply WGAN-gp Transformer to auto-scaling mechanisms on Google cloud platforms, and the WGAN-gp Transformer-based auto-scaling mechanism outperforms the LSTM-based mechanism by significantly reducing VM over-provisioning and under-provisioning rates.
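
For readers unfamiliar with the "improved Wasserstein-GANs" the abstract refers to, the standard WGAN-gp critic objective is sketched below. This is the generic formulation, not a formula taken from this page. Reading it with the Transformer generator as $G$ and the multi-layer perceptron critic as $D$ follows the abstract, but how the paper conditions either network on the workload history, and which value of $\lambda$ it uses, are not stated here and are left open.

$$
L_{D} = \mathbb{E}_{\tilde{x} \sim P_g}\big[D(\tilde{x})\big] - \mathbb{E}_{x \sim P_r}\big[D(x)\big] + \lambda \, \mathbb{E}_{\hat{x} \sim P_{\hat{x}}}\Big[\big(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\big)^2\Big]
$$

$$
\hat{x} = \epsilon x + (1 - \epsilon)\tilde{x}, \quad \epsilon \sim U[0, 1], \qquad L_{G} = -\mathbb{E}_{\tilde{x} \sim P_g}\big[D(\tilde{x})\big]
$$

Here $P_r$ is the distribution of real samples (in this setting, observed workload values), $P_g$ is the distribution of generator outputs $\tilde{x} = G(\cdot)$, and the last term of $L_D$ is the gradient penalty, which pushes the critic's gradient norm toward 1 at points interpolated between real and generated samples.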

Sessions where this paper appears

-

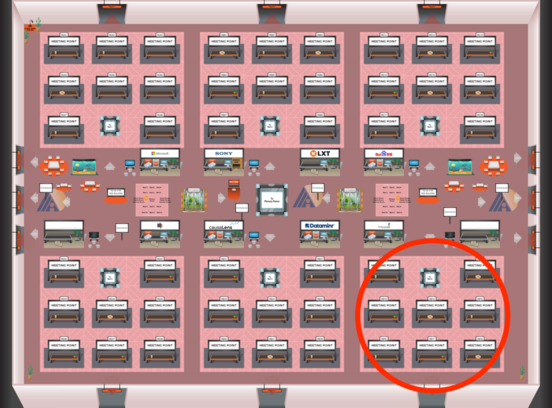

Poster Session 9

Sun, February 27 8:45 AM - 10:30 AM (+00:00)

Sun, February 27 8:45 AM - 10:30 AM (+00:00)

Red 6

Red 6

- Poster Session 7
  Sat, February 26 4:45 PM - 6:30 PM (+00:00)
  Red 6