Code Representation Learning Using Prüfer Sequences (Student Abstract)

Tenzin Jinpa, Yong Gao

[AAAI-22] Student Abstract and Poster Program

Abstract:

An effective and efficient encoding of the source code of a computer program is critical to the success of sequence-to-sequence deep neural network models for code representation learning. In this study, we propose to use the Prüfer sequence of the Abstract Syntax Tree (AST) of a computer program to design a sequential representation scheme that preserves the structural information in an AST. Our representation makes it possible to develop deep-learning models in which signals carried by lexical tokens in the training examples can be exploited automatically and selectively based on their syntactic role and importance. Unlike other recently proposed approaches, our representation is concise and lossless in terms of the structural information of the AST. Results from our experiment show that the Prüfer-sequence-based representation is indeed highly effective and efficient.
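
To make the idea of a Prüfer sequence of an AST concrete, the following is a minimal sketch of the classical Prüfer encoding applied to a Python AST. It is not the authors' implementation: the abstract does not specify how nodes are numbered or how lexical tokens are attached, so the preorder numbering and the helper names `ast_to_tree`, `prufer_sequence`, and `tree_from_prufer` are assumptions made for illustration only.

```python
import ast
import heapq


def ast_to_tree(source):
    """Parse Python source and return (labels, edges).

    Nodes are numbered 0..n-1 in preorder (an assumption of this sketch);
    labels[i] is the AST node type name of node i.
    """
    labels, edges = [], []

    def visit(node, parent_id):
        node_id = len(labels)
        labels.append(type(node).__name__)
        if parent_id is not None:
            edges.append((parent_id, node_id))
        for child in ast.iter_child_nodes(node):
            visit(child, node_id)

    visit(ast.parse(source), None)
    return labels, edges


def prufer_sequence(edges, n):
    """Classical Prüfer encoding of a labeled tree on nodes 0..n-1."""
    adj = {v: set() for v in range(n)}
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    leaves = [v for v in range(n) if len(adj[v]) == 1]
    heapq.heapify(leaves)
    seq = []
    for _ in range(n - 2):
        leaf = heapq.heappop(leaves)       # smallest-numbered leaf
        neighbor = next(iter(adj[leaf]))   # its only remaining neighbor
        seq.append(neighbor)
        adj[neighbor].discard(leaf)
        adj[leaf].clear()
        if len(adj[neighbor]) == 1:        # neighbor has become a leaf
            heapq.heappush(leaves, neighbor)
    return seq


def tree_from_prufer(seq):
    """Rebuild the edge list of the tree encoded by a Prüfer sequence."""
    n = len(seq) + 2
    degree = [1] * n
    for v in seq:
        degree[v] += 1
    leaves = [v for v in range(n) if degree[v] == 1]
    heapq.heapify(leaves)
    edges = []
    for v in seq:
        leaf = heapq.heappop(leaves)
        edges.append((leaf, v))
        degree[v] -= 1
        if degree[v] == 1:
            heapq.heappush(leaves, v)
    # Exactly two leaves remain; they form the last edge.
    edges.append((heapq.heappop(leaves), heapq.heappop(leaves)))
    return edges


if __name__ == "__main__":
    labels, edges = ast_to_tree("x = a + b")
    seq = prufer_sequence(edges, len(labels))
    print([labels[i] for i in seq])  # node types in Prüfer order
```

A tree with n nodes yields a sequence of length n - 2, and `tree_from_prufer` recovers the same set of edges, which is the sense in which the encoding loses no tree structure. The sequence depends on the chosen node numbering, so a different numbering scheme would give a different, equally valid sequence.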

Sessions where this paper appears

- Poster Session 1

Thu, February 24 4:45 PM - 6:30 PM (+00:00)

Thu, February 24 4:45 PM - 6:30 PM (+00:00)

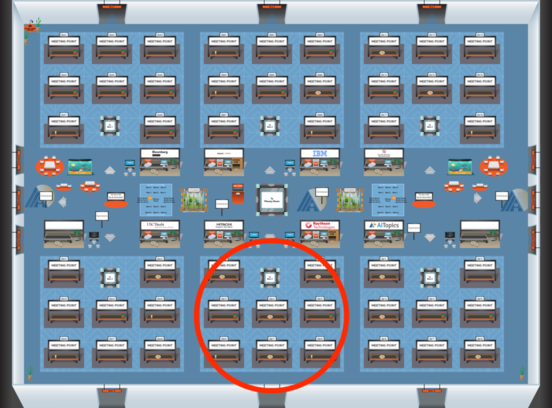

Blue 5

- Poster Session 11

Mon, February 28 12:45 AM - 2:30 AM (+00:00)

Blue 5
