FedSoft: Soft Clustered Federated Learning with Proximal Local Updating

Yichen Ruan, Carlee Joe-Wong

[AAAI-22] Main Track

Abstract:

Traditionally, clustered federated learning groups clients with the same data distribution into a cluster, so that every client is uniquely associated with one data distribution and helps train a model for this distribution. We relax this hard association assumption to soft clustered federated learning, which allows every local dataset to follow a mixture of multiple source distributions. We propose FedSoft, which trains both locally personalized models and high-quality cluster models in this setting. FedSoft limits client workload by using proximal updates to require the completion of only one optimization task from a subset of clients in every communication round. We show, analytically and empirically, that FedSoft effectively exploits similarities between the source distributions to learn personalized and cluster models that perform well.
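The abstract does not state the update rules, so the following is only a minimal sketch of how a proximal local update could let a client serve several soft clusters with a single optimization task. It assumes a local objective of the form f_k(w) + (λ/2) Σ_s u_{ks} ||w − c_s||², where u_{ks} is a client's soft membership in cluster s and c_s is the current cluster model. It also assumes cluster models are re-aggregated as averages of client models weighted by u_{ks}·n_k, where n_k is the local dataset size. The function names (`proximal_local_update`, `aggregate_centers`), the toy quadratic loss, and the plain gradient-descent solver are hypothetical illustrations and may differ from FedSoft's exact formulation.

```python
"""Hypothetical sketch: proximal local update plus soft cluster aggregation.

Assumptions (not stated in the abstract): the local objective is
f_k(w) + (lam / 2) * sum_s u[s] * ||w - c_s||^2, and cluster models are
re-aggregated as averages of client models weighted by u_{ks} * n_k.
"""
from typing import Callable, List, Sequence

Vector = List[float]


def proximal_local_update(
    w0: Sequence[float],
    grad_fn: Callable[[Vector], Vector],
    centers: Sequence[Sequence[float]],
    memberships: Sequence[float],
    lam: float,
    lr: float,
    steps: int,
) -> Vector:
    """Run plain gradient descent on one client's proximal objective.

    One optimization task: the proximal term pulls w toward every cluster
    model at once, weighted by the client's (assumed) soft memberships.
    """
    w = list(w0)
    for _ in range(steps):
        g = grad_fn(w)  # gradient of the local loss f_k at the current w
        for i in range(len(w)):
            # Coordinate i of the gradient of (lam/2) * sum_s u_s * ||w - c_s||^2
            prox = sum(u * (w[i] - c[i]) for u, c in zip(memberships, centers))
            w[i] -= lr * (g[i] + lam * prox)
    return w


def aggregate_centers(
    client_models: Sequence[Sequence[float]],
    memberships: Sequence[Sequence[float]],
    sizes: Sequence[int],
) -> List[Vector]:
    """Recompute each cluster model as a weighted average of client models.

    Client k contributes to cluster s with weight proportional to
    memberships[k][s] * sizes[k] (a hypothetical weighting choice).
    """
    num_clusters = len(memberships[0])
    dim = len(client_models[0])
    centers: List[Vector] = []
    for s in range(num_clusters):
        coeffs = [memberships[k][s] * sizes[k] for k in range(len(client_models))]
        total = sum(coeffs)
        if total == 0:
            raise ValueError(f"cluster {s} has zero total weight")
        centers.append(
            [sum(a * w[i] for a, w in zip(coeffs, client_models)) / total for i in range(dim)]
        )
    return centers


if __name__ == "__main__":
    # Toy local loss f(w) = 0.5 * (w - 3)^2, so grad f(w) = w - 3.
    w = proximal_local_update(
        [0.0], lambda v: [v[0] - 3.0], centers=[[0.0], [2.0]],
        memberships=[0.75, 0.25], lam=1.0, lr=0.1, steps=500,
    )
    print(round(w[0], 6))  # 1.75, i.e. (3 + 1.0 * 0.5) / (1 + 1.0)
    print(aggregate_centers([[1.0], [3.0]], [[1.0, 0.0], [0.5, 0.5]], [10, 20]))  # [[2.0], [3.0]]
```

Because the proximal term already combines all cluster models, each client in this sketch runs one solver loop rather than one per cluster. With quadratic losses the result lands between the client's own optimum and the membership-weighted average of the cluster models, with λ controlling how strongly it is pulled toward the clusters.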

Sessions where this paper appears

-

Poster Session 5

Sat, February 26 12:45 AM - 2:30 AM (+00:00)

Sat, February 26 12:45 AM - 2:30 AM (+00:00)

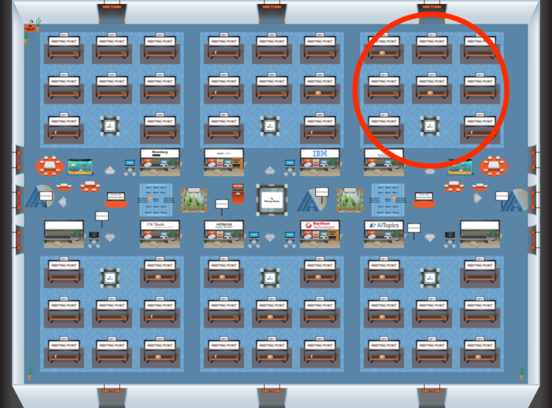

Blue 3

-

Poster Session 10

Sun, February 27 4:45 PM - 6:30 PM (+00:00)

Blue 3

-

Oral Session 10

Sun, February 27 6:30 PM - 7:45 PM (+00:00)

Blue 3