VAST: The Valence-Assessing Semantics Test for Contextualizing Language Models

Robert Wolfe, Aylin Caliskan

[AAAI-22] Main Track

Abstract:

We introduce VAST, the Valence-Assessing Semantics Test, a novel intrinsic evaluation task for contextualized word embeddings (CWEs). Despite the widespread use of contextualizing language models (LMs), researchers have no intrinsic evaluation task for understanding the semantic quality of CWEs and their unique properties as related to contextualization, the change in the vector representation of a word based on surrounding words; tokenization, the breaking of uncommon words into subcomponents; and LM-specific geometry learned during training. VAST uses valence, the association of a word with pleasantness, to measure the correspondence of word-level LM semantics with widely used human judgments, and examines the effects of contextualization, tokenization, and LM-specific geometry. Because prior research has found that CWEs from OpenAI's 2019 English-language causal LM GPT-2 perform poorly on other intrinsic evaluations, we select GPT-2 as our primary subject, and include results showing that VAST is useful for 7 other LMs, and can be used in 7 languages. GPT-2 results show that the semantics of a word are more similar to the semantics of context in layers closer to model output, such that VAST scores diverge between our contextual settings, ranging from Pearson’s rho of .55 to .77 in layer 11. We also show that multiply tokenized words are not semantically encoded until layer 8, where they achieve Pearson’s rho of .46, indicating the presence of an encoding process for multiply tokenized words which differs from that of singly tokenized words, for which rho is highest in layer 0. We find that a few neurons with values having greater magnitude than the rest mask word-level semantics in GPT-2’s top layer, but that word-level semantics can be recovered by nullifying non-semantic principal components: Pearson’s rho in the top layer improves from .32 to .76. Downstream POS tagging and sentence classification experiments indicate that the GPT-2 uses these principal components for non-semantic purposes, such as to represent sentence-level syntax relevant to next-word prediction. After isolating semantics, we show the utility of VAST for understanding LM semantics via improvements over related work on four word similarity tasks, with a score of .50 on SimLex-999, better than the previous best of .45 for GPT-2. Finally, we show that 8 of 10 WEAT bias tests, which compare differences in word embedding associations between groups of words, exhibit more stereotype-congruent biases after isolating semantics, indicating that non-semantic structures in LMs also mask social biases.

Introduction Video

Sessions where this paper appears

-

Poster Session 3

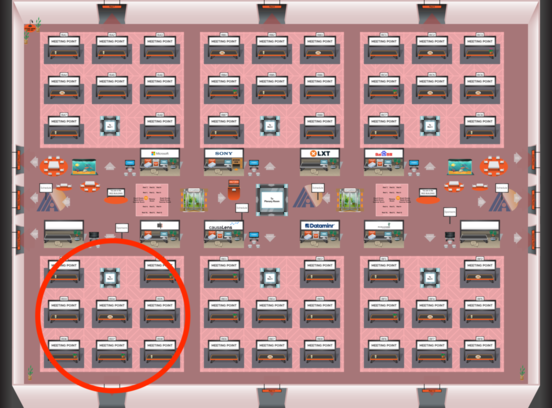

Red 4

Red 4

-

Poster Session 12

Red 4

Red 4