Natural Black-Box Adversarial Examples Against Deep Reinforcement Learning

Mengran Yu, Shiliang Sun

[AAAI-22] Main Track

Abstract:

Black-box attacks in deep reinforcement learning usually retrain substitute policies to mimic behaviors of target policies as well as craft adversarial examples, and attack the target policies with these transferable adversarial examples. However, the transferability of adversarial examples is not always guaranteed. Moreover, current methods of crafting adversarial examples only utilize simple pixel space metrics which neglect semantics in the whole images, and thus generate unnatural adversarial examples. To address these problems, we propose an advRL-GAN framework to directly generate semantically natural adversarial examples in the black-box setting, bypassing the transferability requirement of adversarial examples. It formalizes the black-box attack as a reinforcement learning (RL) agent, which explores natural and aggressive adversarial examples with generative adversarial networks and the feedback of target agents. To the best of our knowledge, it is the first RL-based adversarial attack on a deep RL agent. Experimental results on multiple environments demonstrate the effectiveness of advRL-GAN in terms of reward reductions and magnitudes of perturbations, and validate the sparse and targeted property of adversarial perturbations through visualization.

Introduction Video

Sessions where this paper appears

-

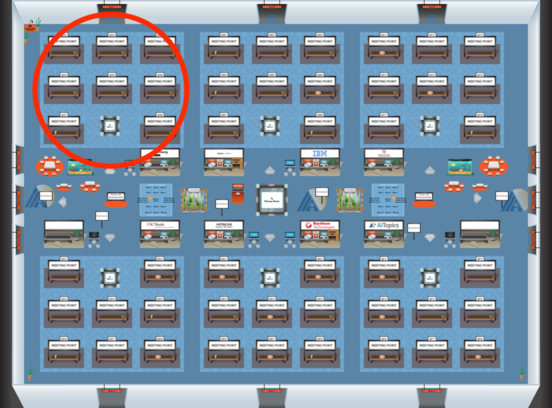

Poster Session 6

Sat, February 26 8:45 AM - 10:30 AM (+00:00)

Sat, February 26 8:45 AM - 10:30 AM (+00:00)

Blue 1

Blue 1

-

Poster Session 12

Mon, February 28 8:45 AM - 10:30 AM (+00:00)

Mon, February 28 8:45 AM - 10:30 AM (+00:00)

Blue 1

Blue 1

-

Oral Session 6

Sat, February 26 10:30 AM - 11:45 AM (+00:00)

Sat, February 26 10:30 AM - 11:45 AM (+00:00)

Blue 1

Blue 1