DeepStochLog: Neural Stochastic Logic Programming

Thomas Winters, Giuseppe Marra, Robin Manhaeve, Luc De Raedt

[AAAI-22] Main Track

Abstract:

Recent advances in neural symbolic learning, such as DeepProbLog, extend probabilistic logic programs with neural predicates. Like graphical models, these probabilistic logic programs define a probability distribution over possible worlds, for which inference is computationally hard. We propose DeepStochLog, an alternative neural symbolic framework based on stochastic definite clause grammars, a type of stochastic logic program that defines a probability distribution over possible derivations rather than over possible worlds. More specifically, we introduce neural grammar rules into stochastic definite clause grammars to create a framework that can be trained end-to-end. We show that inference and learning in neural stochastic logic programming scale much better than they do for neural probabilistic logic programs. Furthermore, the experimental evaluation shows that DeepStochLog achieves state-of-the-art results on challenging neural symbolic learning tasks.
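To make the "distribution over derivations" idea concrete, here is a minimal sketch in plain Python. It is not DeepStochLog's implementation or API. It assumes an MNIST-addition-style task with the illustrative grammar `addition(N) --> digit(N1), digit(N2), {N is N1 + N2}.`, where `digit(Y) --> [X]` is treated as a neural rule whose probabilities come from a classifier's softmax over the input `X`. The "images" are hypothetical logit vectors, and all function names (`softmax`, `one_hot_logits`, `digit_rule_probs`, `addition_derivations`, `addition_prob`) are invented for illustration. Each derivation's probability is the product of the probabilities of the rules it uses, and the probability of a goal is the sum over its successful derivations. No enumeration of possible worlds is involved.

```python
import math
from itertools import product


def softmax(logits):
    # Numerically stable softmax over a list of floats.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def one_hot_logits(digit, strength=10.0):
    # Hypothetical stand-in for an image: the logits a trained digit
    # classifier might emit for a clearly written `digit`.
    return [strength if d == digit else 0.0 for d in range(10)]


def digit_rule_probs(image_logits):
    # Assumed neural rule digit(Y) --> [X]: the probability of rewriting
    # `digit` into terminal X with label Y is the network's output P(Y | X).
    return softmax(image_logits)


def addition_derivations(n, image1, image2):
    # Enumerate derivations of addition(n) --> digit(N1), digit(N2), {N is N1 + N2}.
    # A derivation fixes the labels (n1, n2); its probability is the product
    # of the probabilities of the two neural rule applications it uses.
    p1 = digit_rule_probs(image1)
    p2 = digit_rule_probs(image2)
    for n1, n2 in product(range(10), repeat=2):
        if n1 + n2 == n:  # the arithmetic goal {N is N1 + N2} succeeds
            yield (n1, n2), p1[n1] * p2[n2]


def addition_prob(n, image1, image2):
    # Probability of the goal = sum over its successful derivations.
    return sum(p for _, p in addition_derivations(n, image1, image2))


if __name__ == "__main__":
    img_a, img_b = one_hot_logits(3), one_hot_logits(5)
    best = max(range(19), key=lambda n: addition_prob(n, img_a, img_b))
    print(best, round(addition_prob(best, img_a, img_b), 4))
```

Running the script prints `8` together with a probability close to 1. Because `addition_prob` is a sum of products of softmax outputs, it is differentiable in the logits. That property is what lets a framework of this kind train the neural rules end-to-end from the supervision on `N` alone. A real system would replace the brute-force enumeration over digit pairs with proper grammar parsing and tabling.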


Sessions where this paper appears

-

Poster Session 6

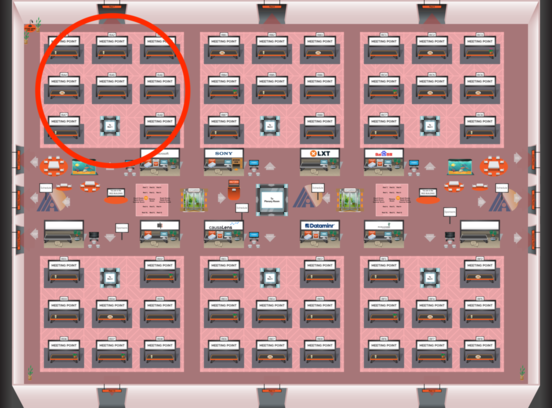

Red 1

Red 1

- Poster Session 10: Red 1