Can Vision Transformers Learn without Natural Images?

Kodai Nakashima, Hirokatsu Kataoka, Asato Matsumoto, Kenji Iwata, Nakamasa Inoue, Yutaka Satoh

[AAAI-22] Main Track

Abstract:

Is it possible to complete Vision Transformer (ViT) pre-training without natural images and human-annotated labels? This question has become increasingly relevant because current ViT pre-training relies heavily on large numbers of natural images and human-annotated labels, and the use of natural images has raised problems related to privacy violations, inadequate fairness protection, and labor-intensive annotation. In this paper, we experimentally verify that the formula-driven supervised learning (FDSL) framework yields results that are comparable with, and can even partially outperform, sophisticated self-supervised learning (SSL) methods such as SimCLRv2 and MoCov2, without using any natural images in the pre-training phase. We also consider ways to reorganize FractalDB generation, based on our tentative conclusion that there is room for improvement in the iterated function system (IFS) parameter settings of such databases. Moreover, we show that while ViTs pre-trained without natural images produce visualizations that differ somewhat from those of ImageNet pre-trained ViTs, they can still interpret natural image datasets to a large extent. Finally, in experiments using the CIFAR-10 dataset, we show that our model achieves a performance rate of 97.8, which is comparable to the 97.4 achieved with SimCLRv2 and the 98.0 achieved with ImageNet pre-training.
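To give a rough sense of what an IFS parameter setting means for image generation, the following is a minimal Python sketch of the standard random-iteration ("chaos game") procedure for rendering an IFS attractor. It is not the authors' FractalDB code: the function names `sample_ifs` and `render_ifs`, the coefficient ranges, the number of maps, and the image size are all assumptions chosen only for illustration.

```python
import random


def sample_ifs(num_maps=3, seed=0):
    """Draw a hypothetical IFS as a list of affine maps (a, b, c, d, e, f).

    Each map sends (x, y) to (a*x + b*y + e, c*x + d*y + f).
    The ranges below are assumptions, not FractalDB's settings: linear
    coefficients are kept in [-0.4, 0.4] so every map is contractive.
    """
    rng = random.Random(seed)
    maps = []
    for _ in range(num_maps):
        a, b, c, d = (rng.uniform(-0.4, 0.4) for _ in range(4))
        e, f = (rng.uniform(-1.0, 1.0) for _ in range(2))
        maps.append((a, b, c, d, e, f))
    return maps


def render_ifs(maps, num_points=10000, size=32, seed=0, burn_in=20):
    """Render the attractor of an IFS as a size x size binary grid."""
    rng = random.Random(seed)
    x, y = 0.0, 0.0
    points = []
    for i in range(num_points + burn_in):
        # Random iteration: apply one randomly chosen affine map per step.
        a, b, c, d, e, f = rng.choice(maps)
        x, y = a * x + b * y + e, c * x + d * y + f
        if i >= burn_in:  # skip early points that are not yet near the attractor
            points.append((x, y))

    # Normalize the point cloud to its bounding box before rasterizing.
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    min_x, min_y = min(xs), min(ys)
    span_x = (max(xs) - min_x) or 1.0
    span_y = (max(ys) - min_y) or 1.0

    grid = [[0] * size for _ in range(size)]
    for px, py in points:
        col = min(size - 1, int((px - min_x) / span_x * size))
        row = min(size - 1, int((py - min_y) / span_y * size))
        grid[row][col] = 1
    return grid


if __name__ == "__main__":
    image = render_ifs(sample_ifs(seed=1))
    for row in image:
        print("".join("#" if cell else "." for cell in row))
```

In this sketch, each distinct parameter set returned by `sample_ifs` produces a different shape, which is why the choice of parameter ranges influences what a generated database looks like.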


Sessions where this paper appears

-

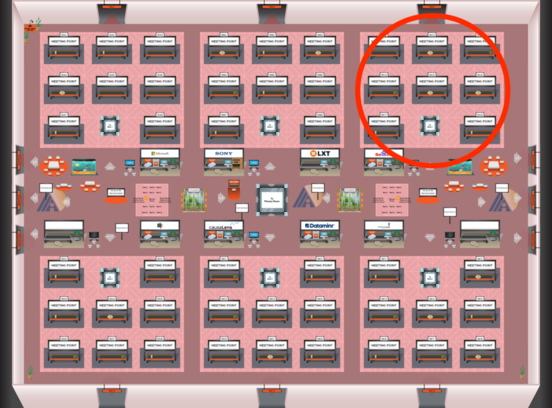

Poster Session 1

Red 3

Red 3

-

Poster Session 11

Red 3

Red 3