Attribute-based Progressive Fusion Network for RGBT Tracking

Yun Xiao, Mengmeng Yang, Chenglong Li, Lei Liu, Jin Tang

[AAAI-22] Main Track

Abstract:

RGBT tracking usually suffers from various challenge factors, such as fast motion, scale variation, illumination variation, thermal crossover and occlusion, to name a few. Existing works often study fusion models to solve all challenges simultaneously, and it requires fusion models complex enough and training data large enough, which are usually difficult to be constructed in real-world scenarios. In this work, we disentangle the fusion process via the challenge attributes, and thus propose a novel Attribute-based Progressive Fusion Network (APFNet) to increase the fusion capacity with a small number of parameters while reducing the dependence on large-scale training data. In particular, we design five attribute-specific fusion branches to integrate RGB and thermal features under the challenges of thermal crossover, illumination variation, scale variation, occlusion and fast motion respectively. By disentangling the fusion process, we can use a small number of parameters for each branch to achieve robust fusion of different modalities and train each branch using the small training subset with the corresponding attribute annotation. Then, to adaptive fuse features of all branches, we design an aggregation fusion module based on SKNet. Finally, we also design an enhancement fusion transformer to strengthen the aggregated feature and modality-specific features. Experimental results on benchmark datasets demonstrate the effectiveness of our APFNet against other state-of-the-art methods.

Introduction Video

Sessions where this paper appears

-

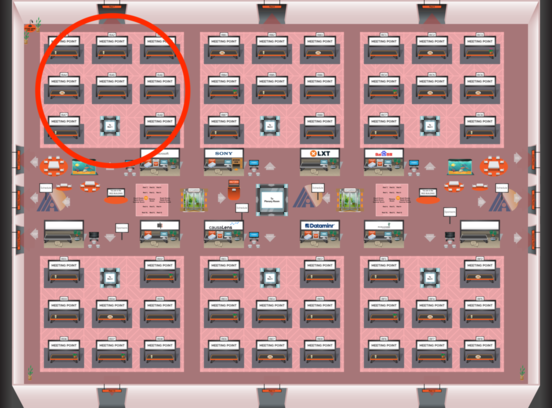

Poster Session 5

Red 1

Red 1

-

Poster Session 9

Red 1

Red 1