Gender and Racial Stereotype Detection in Legal Opinion Word Embeddings

Sean Matthews, John Hudzina, Dawn Sepehr

[AAAI-22] AI for Social Impact Track

Abstract:

Studies have shown that some Natural Language Processing (NLP) systems encode and replicate harmful biases with potential adverse ethical effects in our society. In this article, we propose an approach for identifying gender and racial stereotypes in word embeddings trained on judicial opinions from U.S. case law. Embeddings containing stereotype information may cause harm when used by downstream systems for classification, information extraction, question answering, or other machine learning systems used to build legal research tools. We first explain how previously proposed methods for identifying these biases are not well suited for use with word embeddings trained on legal opinion text. We then propose a domain adapted method for identifying gender and racial biases in the legal domain. Our analyses using these methods suggest that racial and gender biases are encoded into word embeddings trained on legal opinions. These biases are not mitigated by exclusion of historical data, and appear across multiple large topical areas of the law. Implications for downstream systems that use legal opinion word embeddings and suggestions for potential mitigation strategies based on our observations are also discussed.

Introduction Video

Sessions where this paper appears

-

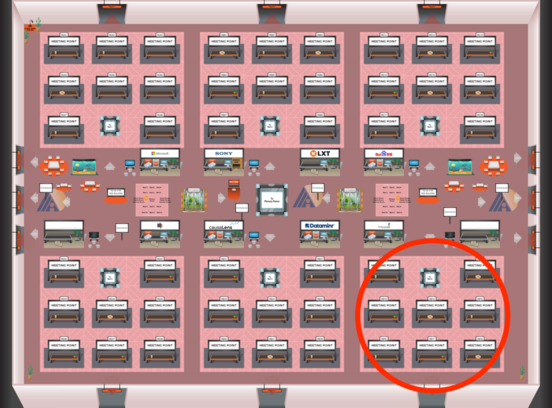

Poster Session 2

Fri, February 25 12:45 AM - 2:30 AM (+00:00)

Fri, February 25 12:45 AM - 2:30 AM (+00:00)

Red 6

Red 6

-

Poster Session 5

Sat, February 26 12:45 AM - 2:30 AM (+00:00)

Sat, February 26 12:45 AM - 2:30 AM (+00:00)

Red 6

Red 6

-

Oral Session 2

Fri, February 25 2:30 AM - 3:45 AM (+00:00)

Fri, February 25 2:30 AM - 3:45 AM (+00:00)

Red 6

Red 6